Signal vs Noise: Making Smart AI Choices

Since November 2022, the AI landscape has felt chaotic. Every day brings new models, techniques, and approaches, and yet another way to apply Large Language Models (LLMs) to solve problems: open source and open weights, foundation models, Retrieval-Augmented Generation (RAG), and agentic engineering, among many others. For the international development sector, this means the risks of implementation are enormous. Are we truly investing our limited resources in the right model, technique, or approach in this ever-changing landscape? Before we invest, we must know whether our choices today will become obsolete, unaffordable, or unsafe tomorrow. That’s where an AI Sandbox comes in: more than a playground, it’s a strategic imperative.

What’s in a Sandbox?

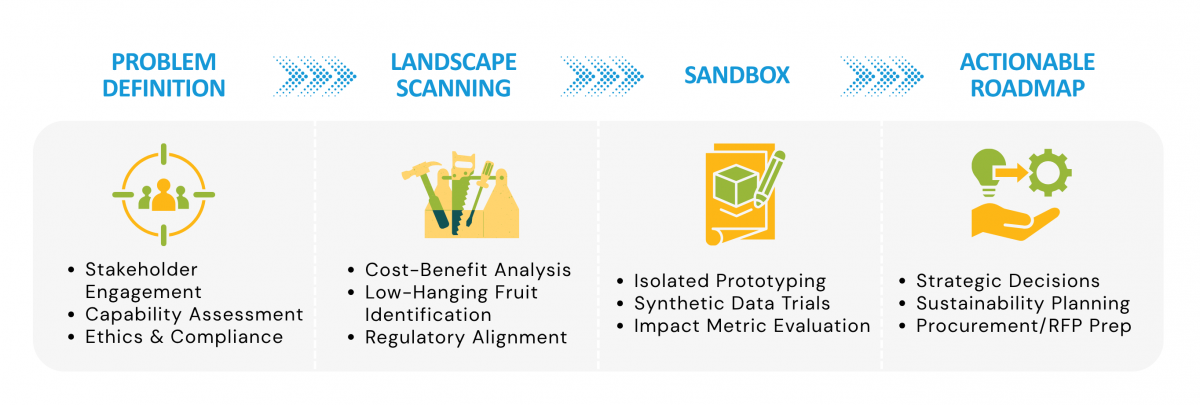

A sandbox is a controlled environment. Here, we test high-risk technology against real-world problems without compromising data, processes, or organizational integrity. We ask difficult questions and test whether a new AI approach actually works better than what is already in place. By trying and failing quickly and cheaply, we find resilient solutions and move forward. AI remains a volatile investment. Private companies push proprietary black boxes with shifting prices, inviting adopters to trade data for speed and raising the risk of digital colonialism at a moment when countries are pushing for digital sovereignty. We cannot afford speculative pilots that lead nowhere. We must protect every dollar. Sandboxing acts as due diligence. It filters out the hype-driven “noise” to find the “signal”: a sovereign, cost-effective, and lasting solution.

Efficiency and Trust

People traditionally measure technology by productivity – doing things faster. But in the public sector and international development, safety matters most. Speed alone neither brings sustainability nor protects citizen privacy. Development Gateway’s AI Sandbox focuses on two aspects: efficiency and trust. While others openly consider replacing humans with agents, we seek the opposite. We look for use cases where we can trust an AI agent to do the heavy lifting – like retrieving and synthesizing data – while keeping the human in the loop. In our framework, the human never acts as a bottleneck. Instead, the human provides verification, direction, and an ethical compass to align outputs with national values and laws.

Building a Roadmap

AI platforms often look great on paper but fail to scale for a variety of reasons. Our sandbox approach helps break that cycle. At the heart of our process is an actionable roadmap grounded in local factors: available computing resources, specific languages, and the long-term survival of the platform and its data processes. We do not just produce a report. Depending on the needs of our partners, we directly support the creation of the technical requirements for a Request for Proposal, or we provide strategic insight into the direct selection of a technology or vendor. We are able to tell ministries and donors: we tested this, we know it works here, we know the costs, and we know where the human must stand to keep it safe. When donor funds run scarce, mistaking noise for signal costs too much. A sovereign AI sandbox helps ensure we invest in a proven, lasting, and trusted future for our partners.

We are building this Sandbox not just as a series of tools, but as a blueprint for the international development sector. Deciding what’s noise and what’s signal should be a collective challenge, one that includes sharing failures as well as successes. We’ll share our findings as we go, and we hope to receive feedback from anyone who wants to build resilient, sustainable, and safe AI together.